This post describes how to deploy and run a Spring Boot application on an AWS EC2 instance.

Generate Spring Boot Application

1. Generate a Spring Boot application with Spring Initializr (https://start.spring.io). Enter the Group and Artifact details, add the Web dependency (needed for the REST controller in step 3), and choose Generate Project. The project is generated as a Maven project by default, so make sure Maven is installed on your local machine to build it.

2. When you choose Generate Project, a zip file named aws-ec2-springboot.zip is downloaded. Extract it and import the Maven project into your Eclipse IDE.

3. Create HelloController.java in the project: a REST controller that returns a greeting for the root URL (/).

4. Open a command prompt and go to the project directory. Run the following command, which builds the application and then starts it:

```
<YourDirectory>\aws-ec2-springboot>mvn clean install spring-boot:run
```

If the console output shows that the application started without errors, it is running (by default on port 8080).

5. Access the application from your browser at the following URL:

http://localhost:8080/

If the page displays the greeting returned by HelloController, your application is ready to be deployed to an AWS EC2 instance.
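To run the same check from code, for example in a script after each deployment, here is a minimal sketch using the JDK's built-in `java.net.http.HttpClient` (Java 11+). The class name `EndpointCheck`, the 5-second timeouts, and the expected text are illustrative assumptions, not part of the original project. It defaults to the local URL but accepts any URL as an argument, so it can be reused against the EC2 instance later.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

// Hypothetical smoke check (not part of the original project): fetches a URL
// and reports whether it answered HTTP 200 with a body containing the expected text.
final class EndpointCheck {

    static boolean returnsGreeting(String url, String expectedText)
            throws IOException, InterruptedException {
        HttpClient client = HttpClient.newBuilder()
                .version(HttpClient.Version.HTTP_1_1) // plain HTTP/1.1 keeps the check simple
                .connectTimeout(Duration.ofSeconds(5)) // assumed timeout
                .build();
        HttpRequest request = HttpRequest.newBuilder(URI.create(url))
                .timeout(Duration.ofSeconds(5))
                .GET()
                .build();
        HttpResponse<String> response =
                client.send(request, HttpResponse.BodyHandlers.ofString());
        return response.statusCode() == 200 && response.body().contains(expectedText);
    }

    public static void main(String[] args) throws Exception {
        // Defaults to the local URL from step 5; pass http://<EC2-IP>:8080/ later.
        String url = args.length > 0 ? args[0] : "http://localhost:8080/";
        boolean ok = returnsGreeting(url, "Greetings from Spring Boot");
        System.out.println(url + " -> " + (ok ? "OK" : "unexpected response"));
    }
}
```

Compile with `javac EndpointCheck.java` and run `java EndpointCheck`; if the controller is up, it should report `OK`. If nothing is listening, `send` throws a connection exception instead of returning `false`.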

Launch EC2 Instance

Next, launch an EC2 instance on AWS. This post describes how to launch a new Linux EC2 instance on AWS.

Java Installation on the Launched EC2 Instance

To run a Spring Boot application on an EC2 instance, Java must be installed on the instance. Refer to this post to install Java on a Linux EC2 instance.
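Which Java version is enough depends on your Spring Boot version: Spring Boot 3.x requires Java 17 or later, while 2.x runs on Java 8 or later. Running `java -version` on the instance is the quickest check. If you want to automate it, the sketch below parses the `java.version` system property and handles both the legacy style (`1.8.0_392`) and the modern style (`17.0.9`). The class name `JavaVersionCheck` and the default minimum of 17 are assumptions; pass 8 if your project uses Spring Boot 2.x.

```java
// Hypothetical helper (not part of the original project): checks that the
// running JVM meets a minimum Java feature release.
final class JavaVersionCheck {

    // Parses a java.version string into its feature release number.
    // Legacy scheme (Java 8 and earlier): "1.8.0_392" -> 8.
    // Modern scheme (Java 9+): "17.0.9" -> 17, "21" -> 21, "11-ea" -> 11.
    static int featureVersion(String javaVersion) {
        String v = javaVersion.startsWith("1.") ? javaVersion.substring(2) : javaVersion;
        // Keep only the leading digits; stop at '.', '_', '-', '+', etc.
        int end = 0;
        while (end < v.length() && Character.isDigit(v.charAt(end))) {
            end++;
        }
        if (end == 0) {
            throw new IllegalArgumentException("Unrecognized java.version: " + javaVersion);
        }
        return Integer.parseInt(v.substring(0, end));
    }

    static boolean meetsMinimum(String javaVersion, int required) {
        return featureVersion(javaVersion) >= required;
    }

    public static void main(String[] args) {
        // Assumed default: 17 for Spring Boot 3.x; pass 8 for Spring Boot 2.x.
        int required = args.length > 0 ? Integer.parseInt(args[0]) : 17;
        String running = System.getProperty("java.version");
        boolean ok = meetsMinimum(running, required);
        System.out.println("java.version=" + running + " required>=" + required + " ok=" + ok);
        if (!ok) {
            System.exit(1); // non-zero exit so scripts can detect the failure
        }
    }
}
```

On the instance, `javac JavaVersionCheck.java && java JavaVersionCheck 8` exits with status 1 if the installed Java is older than the given release.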

Copy the Spring Boot App to the AWS EC2 Instance

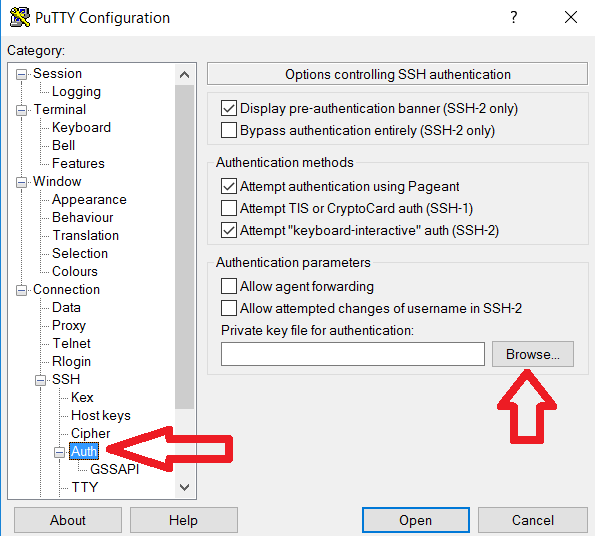

Here we use WinSCP to copy the application JAR from your local machine to the remote EC2 machine. The Maven build in step 4 writes it to target/aws-ec2-springboot-0.0.1-SNAPSHOT.jar; copy it to /home/ec2-user/ on the instance. WinSCP needs a .ppk (PuTTY private key) file to connect to the EC2 instance. If you have not generated one yet, refer to this post to create it. Then open WinSCP, connect to the EC2 machine, and copy the JAR.
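To confirm the JAR arrived intact, you can compare SHA-256 checksums of the local and remote copies. Below is a minimal sketch using `java.security.MessageDigest`; the class name `JarChecksum` and the default path are assumptions. It prints the hash first, so on the instance you can compare it with the output of `sha256sum /home/ec2-user/aws-ec2-springboot-0.0.1-SNAPSHOT.jar` instead of copying the program over.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical helper (not part of the original project): computes the
// SHA-256 digest of a file as a lowercase hex string.
final class JarChecksum {

    static String sha256Hex(Path file) throws IOException {
        MessageDigest digest;
        try {
            digest = MessageDigest.getInstance("SHA-256");
        } catch (NoSuchAlgorithmException e) {
            // Every Java platform is required to support SHA-256.
            throw new IllegalStateException("SHA-256 not available", e);
        }
        // Stream the file in chunks so large JARs are not loaded into memory at once.
        try (InputStream in = Files.newInputStream(file)) {
            byte[] buffer = new byte[8192];
            int read;
            while ((read = in.read(buffer)) != -1) {
                digest.update(buffer, 0, read);
            }
        }
        StringBuilder hex = new StringBuilder();
        for (byte b : digest.digest()) {
            hex.append(String.format("%02x", b));
        }
        return hex.toString();
    }

    public static void main(String[] args) throws IOException {
        // Assumed default: the JAR produced by the Maven build in step 4.
        Path jar = Paths.get(args.length > 0 ? args[0] : "target/aws-ec2-springboot-0.0.1-SNAPSHOT.jar");
        System.out.println(sha256Hex(jar) + "  " + jar.getFileName());
    }
}
```

If the two hashes differ, copy the JAR again before trying to start it.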

Start and Access the Spring Boot App

Log in to the EC2 machine using PuTTY; this post describes how to access an Amazon EC2 instance from Windows using PuTTY. Because the application was copied to /home/ec2-user/aws-ec2-springboot-0.0.1-SNAPSHOT.jar, run the following command from /home/ec2-user/:

```
java -jar aws-ec2-springboot-0.0.1-SNAPSHOT.jar
```

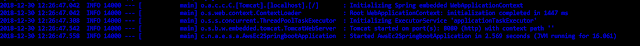

If the log output shows that the application started without errors, your Spring Boot application is running on the AWS EC2 instance.
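`java -jar` starts whichever class the JAR's manifest names in its `Main-Class` entry. Spring Boot's repackaged JARs point `Main-Class` at a Spring Boot launcher class and record your own application class under `Start-Class`. If the command instead fails with a "no main manifest attribute" error, the copied file is usually a plain Maven JAR rather than the executable one produced by the Spring Boot Maven plugin. The sketch below reads both entries with `java.util.jar.JarFile`; the class name `JarManifestInfo` is hypothetical.

```java
import java.io.IOException;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

// Hypothetical helper (not part of the original project): prints the
// manifest entries that `java -jar` relies on.
final class JarManifestInfo {

    // Returns the named main-section manifest attribute, or null if absent.
    static String mainAttribute(String jarPath, String name) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            Manifest manifest = jar.getManifest();
            if (manifest == null) {
                return null; // no META-INF/MANIFEST.MF at all
            }
            return manifest.getMainAttributes().getValue(name);
        }
    }

    public static void main(String[] args) throws IOException {
        String jar = args.length > 0 ? args[0] : "aws-ec2-springboot-0.0.1-SNAPSHOT.jar";
        // Main-Class: what `java -jar` launches.
        // Start-Class: the application class a Spring Boot launcher hands off to.
        System.out.println("Main-Class:  " + mainAttribute(jar, "Main-Class"));
        System.out.println("Start-Class: " + mainAttribute(jar, "Start-Class"));
    }
}
```

A `null` for `Main-Class` means `java -jar` has nothing to launch; rebuild with `mvn clean install` and copy the JAR again.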

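Finally, access the web application from your browser using the EC2 instance's IP address. The instance's security group must allow inbound TCP traffic on port 8080, and from outside AWS you need the instance's public IP address or public DNS name.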

http://<EC2-IP>:8080/

http://10.205.168.99:8080/
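If the browser cannot connect, it helps to know whether port 8080 is reachable at all. Here is a minimal TCP-level sketch using `java.net.Socket` with a connect timeout; the class name `PortCheck` and the 3-second timeout are assumptions. As a rule of thumb, a refused connection usually means nothing is listening on the port (the app is not running), while a timeout often points to a security group or firewall silently dropping the traffic.

```java
import java.io.IOException;
import java.net.ConnectException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.net.SocketTimeoutException;

// Hypothetical diagnostic (not part of the original project): tries to open a
// TCP connection to host:port and classifies the outcome.
final class PortCheck {

    enum Result { OPEN, REFUSED, TIMED_OUT, ERROR }

    static Result check(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return Result.OPEN;
        } catch (SocketTimeoutException e) {
            return Result.TIMED_OUT; // no answer within the timeout: often a firewall/security group
        } catch (ConnectException e) {
            return Result.REFUSED;   // host answered, but nothing listens on the port
        } catch (IOException e) {
            return Result.ERROR;     // e.g. unresolvable host name
        }
    }

    public static void main(String[] args) {
        String host = args.length > 0 ? args[0] : "localhost";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 8080;
        System.out.println(host + ":" + port + " -> " + check(host, port, 3000));
    }
}
```

Run it from your local machine as `java PortCheck <EC2-IP> 8080`. Exact behavior for refused connections can vary by operating system, so treat the classification as a hint rather than a diagnosis.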

If the browser shows the greeting returned by HelloController, you have successfully run a Spring Boot application on an AWS EC2 instance. Great job!


HelloController.java (step 3):

```java
package com.nv.aws.ec2.springboot.awsec2springboot;

import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

// @RestController: return values are written directly to the HTTP response body.
@RestController
public class HelloController {

    // Maps the root URL ("/") to this method.
    @RequestMapping("/")
    public String index() {
        return "AWS EC2 Springboot - Greetings from Spring Boot on AWS !!!";
    }
}
```
